CS21 Lab4: CUDA Fire Simulator

Here are the lab groups (I think):

| Lab 3 Partners | |
| --- | --- |
| Sam and Niels | Chloe and Kyle |
| Jordan and Luis | Steven and Elliot |
| Nick and Phil | Katherine and Ames |

See the git howto for information about how you can set up a git repository for your joint lab 2 project.

This lab is designed to give you some practice designing, writing and running CUDA programs.

Contents:

Programming in CUDA and Getting Started

Project Details

Project Requirements

Getting Started

Useful Functions and Links to more Resources

Submission and Demo

Getting Started

I suggest starting by looking at and running my simple example that uses myopengllib to simultaneously animate a CUDA application as it runs on the GPU:

cp ~newhall/public/cs87/cuda_animation_example/* .

The header file in this directory has comments about how to use the library. simple.cu is an example program that uses the library to animate a simple CUDA kernel in which values in a 2-D grid are cyclically updated.

Next, I encourage you to do some reading about CUDA programming. We have a copy of "CUDA by Example" in the main lab, which is an excellent reference. There are also links to on-line CUDA programming references and tutorials in the "Useful Resources" section below. We also have the CUDA SDK example programs on our system that you can look over and try running:

The CUDA SDK application sources are here: /usr/local/CUDA_SDK/C/src/

You can run binaries from here: /usr/local/CUDA_SDK/C/bin/linux/release/

Some of these examples use features of CUDA that are beyond what you need to use in this lab, so don't get too bogged down in slogging through these.

The Programming Model in CUDA

The CUDA programming model consists of a global shared memory and a set of multi-threaded blocks that run in parallel. CUDA has very limited support for synchronization (only threads in the same thread block can synchronize their actions). As a result, CUDA programs are often written as purely parallel CUDA kernels that are run on the GPU, where code running on the CPU implements the synchronization steps: CUDA programs often have alternating steps of parallel execution on the GPU and sequential execution on the CPU.

A typical CUDA program may look like:

- The initialization phase and GPU memory allocation and copy phase: memory is allocated on the GPU by calling cudaMalloc. Often program data are initialized on the CPU in a CPU-side copy of the data in RAM, and then copied to the GPU using cudaMemcpy. GPU data can also be initialized on the GPU using a CUDA kernel, in which case the cudaMemcpy does not need to be done. For example, initializing all elements in an array to 0 can be done very efficiently on the GPU.

- A main computation phase that consists of one or more calls to CUDA kernel functions. This could be a loop run on the CPU that makes calls to one or more CUDA kernels to perform sub-steps of the larger computation. Because there is almost no support for GPU thread synchronization, CUDA kernels usually implement the parallel parts of the computation and the CPU side implements the synchronization events. An embarrassingly parallel application could run as a single CUDA kernel call.

- There may be a sequential output phase where data are copied from the GPU to the CPU, using cudaMemcpy, and output in some form.

- A clean-up phase where CUDA and CPU memory is freed. cudaFree is used to free GPU memory allocated with cudaMalloc.
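The four phases above can be sketched as follows. This is only a minimal illustration, not the lab solution: the names step_kernel and run_phases and the constant N are hypothetical, and the kernel just adds 1 to every cell so that the result is easy to check.

```cuda
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>

#define N 500  // hypothetical world dimension (N x N cells)

// Hypothetical kernel: each single-thread block adds 1 to one cell.
__global__ void step_kernel(int *dev_grid) {
    int col = blockIdx.x;
    int row = blockIdx.y;
    if (row < N && col < N) {
        dev_grid[row * N + col] += 1;
    }
}

// Runs all four phases. Returns the value of cell 0 after `iters`
// kernel calls, or -1 if a CUDA call fails.
int run_phases(int iters) {
    static int grid[N * N];              // CPU-side copy of the data in RAM
    int *dev_grid = NULL;                // GPU-side copy of the data
    size_t bytes = sizeof(int) * N * N;

    // 1. initialization: init on the CPU, allocate on the GPU, copy over
    for (int i = 0; i < N * N; i++) {
        grid[i] = 0;
    }
    if (cudaMalloc((void **)&dev_grid, bytes) != cudaSuccess) {
        return -1;
    }
    if (cudaMemcpy(dev_grid, grid, bytes, cudaMemcpyHostToDevice) != cudaSuccess) {
        cudaFree(dev_grid);
        return -1;
    }

    // 2. main computation: a CPU loop of kernel calls; calls issued to the
    //    same (default) stream run one after another, so each kernel
    //    boundary acts as a synchronization point
    dim3 blocks(N, N);
    for (int i = 0; i < iters; i++) {
        step_kernel<<<blocks, 1>>>(dev_grid);
    }

    // 3. output: copy results back (this waits for the kernels to finish)
    cudaError_t err = cudaMemcpy(grid, dev_grid, bytes, cudaMemcpyDeviceToHost);

    // 4. clean-up
    cudaFree(dev_grid);
    return (err == cudaSuccess) ? grid[0] : -1;
}
```

Calling run_phases(3) from main should return 3 on a machine with a working GPU, since every cell is incremented once per kernel call.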

GPU functions in CUDA

__global__ functions are CUDA kernel functions: functions that are called from the CPU and run on the GPU. They are invoked using this syntax:

my_kernel_func<<< blocks, threads >>>(args ...);

__device__ functions are those that can be called only from other __device__ functions or from __global__ functions. They are for good modular code design of the GPU-side code. They are called using a similar syntax as any C function call. For example:

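// declared before its first use in the kernel below
__device__ int findmax(int a, int b);

// kernels must have a void return type
__global__ void my_kernel_function(int a, int *dev_array) {
  // row-major offset: each row of blocks holds gridDim.x elements
  int offset = blockIdx.x + blockIdx.y*gridDim.x;
  int max = findmax(a, dev_array[offset]);
  ...
}

__device__ int findmax(int a, int b) {
  if(a > b) {
    return a;
  }
  return b;
}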

Memory in CUDA

GPU memory needs to be explicitly allocated (cudaMalloc) and explicitly freed (cudaFree); if initial values for data are on the CPU, they need to be copied to the GPU-side data (cudaMemcpy). When you program in CUDA you need to think carefully about what is running on the CPU on data stored in RAM, and what is running on the GPU on data stored on the GPU. Memory allocated on the GPU (via cudaMalloc) stays on the GPU between kernel calls. If the CPU wants intermediate or final results, they have to be explicitly copied from the GPU to the CPU.

In CUDA all arrays are 1-dimensional, so each parallel thread's location in the multi-dimensional thread blocks that specify the parallelism needs to be explicitly mapped onto an offset into a 1-dimensional CUDA array. Often there is not a perfect 1-1 thread-to-data mapping, and the programmer needs to handle this case: either avoid accessing invalid memory locations beyond the bounds of an array (when there are more threads than data elements), or ensure that every data element is processed (when there are fewer threads than data elements).

For this lab, you can assume that if you use a 2-D layout of blocks (dim3 blocks(DIM,DIM)), there are enough GPU threads for each to handle a single element in a 500x500 array.
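The thread-to-data mapping described above can be sketched for the case where there are more threads than data elements. The sketch assumes 16x16-thread blocks, which do not divide 500 evenly; the names cell_offset, blocks_needed, fill_kernel and launch_fill and the constants N and TPB are hypothetical.

```cuda
#include <cuda_runtime.h>

#define N 500    // hypothetical grid dimension (N x N elements)
#define TPB 16   // hypothetical threads per block in each dimension

// Row-major offset of element (row, col) in a 1-D array that stores
// a 2-D grid with `width` columns.
__host__ __device__ inline int cell_offset(int row, int col, int width) {
    return row * width + col;
}

// Number of blocks needed so that blocks * tpb >= n (ceiling division).
__host__ __device__ inline int blocks_needed(int n, int tpb) {
    return (n + tpb - 1) / tpb;
}

// Each thread sets at most one element of the N x N grid.
__global__ void fill_kernel(int *dev_grid, int value) {
    int col = blockIdx.x * blockDim.x + threadIdx.x;
    int row = blockIdx.y * blockDim.y + threadIdx.y;
    // 32 blocks * 16 threads = 512 > 500 in each dimension, so the
    // extra threads must not touch memory beyond the array bounds
    if (row < N && col < N) {
        dev_grid[cell_offset(row, col, N)] = value;
    }
}

// Host-side helper: dev_grid must point to N*N ints of GPU memory.
void launch_fill(int *dev_grid, int value) {
    dim3 threads(TPB, TPB);
    dim3 blocks(blocks_needed(N, TPB), blocks_needed(N, TPB));
    fill_kernel<<<blocks, threads>>>(dev_grid, value);
}
```

With one single-thread block per element, as assumed for this lab, the same bounds check still applies; the row and column then come directly from blockIdx.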

Each cell in the simulated world is in one of the following states:

- part of a LAKE

- part of a forest that is UNBURNED

- part of a forest that is BURNING

- part of a forest that has already BURNED

In addition to a cell being in these different states, associated with each cell is its temperature. A cell's temperature range depends on its state:

- 60 degrees for UNBURNED forest cells

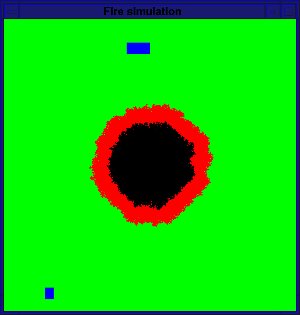

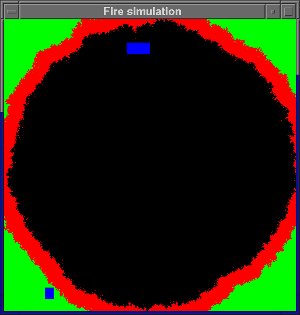

- 300 to 1000 to 60 for a BURNING forest cell. A burning cell goes through increasing and decreasing temperature phases. It starts at the ignition temperature of 300 degrees and increases up to a max of 1000 degrees. Once it reaches 1000 degrees its temperature starts decreasing back down to 60 degrees, at which point it becomes BURNED.

- X degrees for a BURNED cell: you can pick a temperature, but pick one that no UNBURNED or BURNING forest cell can ever be.

- Y degrees for a LAKE cell: you can pick a temperature, but pick one that no forest cell can be.

Your simulator should take the following command line arguments (all are optional arguments):

./firesimulator {-i iters -d step -p prob | -f filename}

-i iters the number of iterations to run

-d step the rate at which a burning cell's temp increases or decreases at each step

-p prob the probability that a cell will catch fire if one of its neighbors is burning

-f filename read in configuration info from a file

Your program should use default values for any values not given as command line arguments. Use 1,000 iterations, a step size of 20, and a probability of 0.2 as the default values.

Options -i, -d and -p are not compatible with -f. The file format is discussed below.
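One way to handle these options is with getopt on the CPU side. The following is a sketch under stated assumptions: the struct SimConfig and the function parse_args are hypothetical names, and the defaults are the ones given in the paragraph above (1,000 iterations, step 20, probability 0.2).

```cuda
#include <cstdio>
#include <cstdlib>
#include <unistd.h>   // getopt, optarg, optind

// Hypothetical container for the simulator's settings.
struct SimConfig {
    int iters;             // -i: number of iterations
    int step;              // -d: temperature step
    float prob;            // -p: probability of catching fire
    const char *filename;  // -f: config file, or NULL if not given
};

// Fills in cfg from the command line, using defaults for missing options.
// Returns 0 on success, -1 on a bad option or if -f is mixed with -i/-d/-p.
int parse_args(int argc, char *argv[], SimConfig *cfg) {
    int c;
    int saw_idp = 0;   // set when any of -i, -d, -p is given

    cfg->iters = 1000;
    cfg->step = 20;
    cfg->prob = 0.2f;
    cfg->filename = NULL;

    optind = 1;   // start scanning at argv[1] (allows repeated calls)
    while ((c = getopt(argc, argv, "i:d:p:f:")) != -1) {
        switch (c) {
        case 'i': cfg->iters = atoi(optarg);       saw_idp = 1; break;
        case 'd': cfg->step = atoi(optarg);        saw_idp = 1; break;
        case 'p': cfg->prob = (float)atof(optarg); saw_idp = 1; break;
        case 'f': cfg->filename = optarg;                       break;
        default:  return -1;   // unknown option or missing value
        }
    }
    if (cfg->filename != NULL && saw_idp) {
        return -1;   // -f is not compatible with -i, -d or -p
    }
    return 0;
}
```

A caller would typically print a usage message and exit when parse_args returns -1. The sketch does not validate ranges (for example, that the probability lies between 0 and 1); that check is left to the caller.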

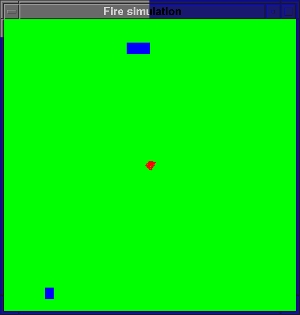

Initialize your world to some default configuration (unless the -f command line is given). The default should start a fire in the center of the world (just a single cell...like a lightning strike). It should also contain a couple lakes (contiguous regions of some size of lake cells).

After simulating the given number of steps your program should print out the cumulative GPU run time and exit. At each time step, a cell's state and/or temperature may change according to these rules:

- if a cell is a LAKE, it stays a LAKE

- if a cell is BURNED, it stays BURNED forever

- if a cell is UNBURNED, then it either starts on fire or stays UNBURNED.

To decide if an UNBURNED cell starts on fire:

- look at the state of its immediate neighbors to the north, south, east and west. The world is not a torus, so each cell has up to 4 neighbors; edge cells have only 2 or 3 neighbors.

- if at least one neighboring cell is on fire, then the cell will catch fire with a probability passed in on the command line (or use 10% as the default probability).

- if a cell is BURNING, then it burns at a constant rate for some number of time steps. However, its temperature first increases from 300 (the fire igniting temp) up to 1000 degrees, and then it decreases from 1000 back down to 60 degrees, at which point it becomes a BURNED cell.

The rate at which its temperature increases or decreases is given by a command line argument -d, or use a default value of 20.

A BURNING cell's state may change based on its new temperature: if its new temperature is <= 60, then this cell is now done burning and its state is now BURNED. Its temperature is set to the BURNED temperature value that you use.
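The temperature rule for a BURNING cell can be sketched as a small function that runs on either the CPU or the GPU. Temperature alone does not say whether a cell is heating up or cooling down, so this sketch assumes an extra per-cell flag (cooling); that flag, the struct Cell, the function update_burning, and the choice of 0 as the BURNED temperature are all hypothetical, not part of the lab specification.

```cuda
#include <cuda_runtime.h>

enum CellState { LAKE, UNBURNED, BURNING, BURNED };

#define IGNITE_TEMP   300   // temperature at which a cell starts burning
#define MAX_TEMP      1000  // peak temperature of a burning cell
#define UNBURNED_TEMP 60    // a burning cell is done once it cools to this
#define BURNED_TEMP   0     // hypothetical choice for the BURNED temperature

// Hypothetical per-cell record; `cooling` is 0 while the temperature
// is rising and 1 once it has reached MAX_TEMP and started to fall.
struct Cell {
    int state;
    int temp;
    int cooling;
};

// Advances one BURNING cell by one time step; other states are unchanged.
__host__ __device__ inline void update_burning(Cell *c, int step) {
    if (c->state != BURNING) {
        return;
    }
    if (!c->cooling) {
        c->temp += step;
        if (c->temp >= MAX_TEMP) {   // clamp at the max, then start cooling
            c->temp = MAX_TEMP;
            c->cooling = 1;
        }
    } else {
        c->temp -= step;
        if (c->temp <= UNBURNED_TEMP) {   // done burning
            c->state = BURNED;
            c->temp = BURNED_TEMP;
            c->cooling = 0;
        }
    }
}
```

With a step of 350, a cell that ignites at 300 goes to 650, 1000, 650, 300 and then becomes BURNED on the fifth update. A kernel would call update_burning on the one cell that its thread is responsible for.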

Input file format

If run with an input file (the -f command line option), the program configuration values are all read in from the file. The file's format should be:

- line 1: number of iterations
- line 2: step size
- line 3: probability
- line 4: the lightning strike cell (its (i,j) coordinates)
- line 5: number of lakes
- lines 6 to numlakes+5: lines of (i,j) coordinate pairs of the upper left corner and lower right corner of each rectangular lake

The lake coordinates are given in terms of the 2-D array of cell values that you initialize on the CPU. All cells specified in that rectangle should be lake cells; all others should be forest cells. For example:

500
40
0.3
250 400
2
20 30 50 70
100 60 120 110

This will run a simulation for 500 iterations, with a temperature step size of a 40 degree increase or decrease, and with a probability of 30%. It will start with an initial world containing 2 lakes, one with upper left corner at (20,30) and lower right at (50,70), the other with upper left corner at (100,60) and lower right at (120,110). All other cells will be UNBURNED forest cells, except cell (250,400) which will start as BURNING. It is fine if the lakes overlap; the lakes in the world from my example simulation would look less rectangular if I overlapped several lake rectangles.
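Reading this file format on the CPU side can be sketched with fscanf. The names FileConfig and read_world are hypothetical, and the sketch makes two assumptions that the format description does not settle: lake corners are treated as inclusive, and the lightning strike cell is set to BURNING even if it falls inside a lake rectangle.

```cuda
#include <cstdio>

#define N 500   // world dimension compiled into the program

enum { LAKE, UNBURNED, BURNING, BURNED };

// Hypothetical container for the values on lines 1-3 of the file.
struct FileConfig {
    int iters;
    int step;
    float prob;
};

// Reads the configuration and fills in the CPU-side grid.
// Returns 0 on success, -1 if the file is malformed or out of range.
int read_world(FILE *in, FileConfig *cfg, int grid[N][N]) {
    int si, sj, numlakes;

    // lines 1-5: iterations, step, probability, strike cell, lake count
    if (fscanf(in, "%d %d %f %d %d %d", &cfg->iters, &cfg->step,
               &cfg->prob, &si, &sj, &numlakes) != 6) {
        return -1;
    }
    if (si < 0 || si >= N || sj < 0 || sj >= N || numlakes < 0) {
        return -1;
    }

    // everything starts as UNBURNED forest
    for (int i = 0; i < N; i++) {
        for (int j = 0; j < N; j++) {
            grid[i][j] = UNBURNED;
        }
    }

    // one line per lake: upper left (r0,c0) and lower right (r1,c1)
    for (int k = 0; k < numlakes; k++) {
        int r0, c0, r1, c1;
        if (fscanf(in, "%d %d %d %d", &r0, &c0, &r1, &c1) != 4) {
            return -1;
        }
        if (r0 < 0 || c0 < 0 || r1 >= N || c1 >= N || r0 > r1 || c0 > c1) {
            return -1;
        }
        for (int i = r0; i <= r1; i++) {       // corners are inclusive
            for (int j = c0; j <= c1; j++) {   // (an assumption)
                grid[i][j] = LAKE;
            }
        }
    }

    grid[si][sj] = BURNING;   // lightning strike (overrides a lake cell)
    return 0;
}
```

Because fscanf skips whitespace, including newlines, between numbers, the same code reads the file whether or not each value sits on its own line.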

- The size of the grid can be compiled into your program (i.e. you do not need to dynamically allocate space for the CPU-side grid). Instead, just statically declare a 2-dimensional array of 500x500 int values on the CPU side that you initialize to the starting values for your fire simulator. You should, of course, use a constant instead of 500 in your code so you can easily try other size worlds.

- The 2D forest you are simulating is NOT a torus; there is no wrap-around for neighbors on edge points.

- Each cell's value changes based on its current state, and possibly the state of its up to 4 neighboring cells (north, south, east, and west).

- Your program should take optional command line arguments for the number of iterations to run the simulation, the probability a cell catches fire if one or more of its neighbors are on fire, and the rate at which a burning cell's temperature increases or decreases each time step. For example, to run for 500 time steps, using a probability of 20% and a temperature step of 50 do:

./firesimulator -i 500 -p 0.2 -d 50

Because all of these arguments are optional, you should use default values of 1000 time steps, 10%, and 20 degrees for these values.

- Your program should also support an optional command line argument for reading in world configuration information from a file (-f is not compatible with -i, -p or -d).

./firesimulator -f infile

- Your program should contain timers to time the GPU parts of the computation and output the total time after all iterations have been completed.

- At each step, you should color the display pixels based on each cell's state (or temperature). I'd recommend starting with something simple like green for UNBURNED, red for BURNING, black for BURNED, and blue for LAKE. You are welcome to try something more complicated based on actual temperature, but this is not required.

- After the specified number of iterations your program should print out the total CUDA kernel time and exit (just call exit(0)).

- You must use a 2-D grid of blocks layout on the GPU to match the 2-D array that is being modeled:

dim3 blocks(DIM, DIM);

note: A 1-D grid of 500x500 blocks is too big for most, possibly all, of our graphics cards. If you don't use a 2-D grid, your program will not work and you will see some very strange behavior. To see the graphics card specs for our machines, look at lab machine specs.

- You should run your kernels as DIMxDIM blocks, each with a single thread:

my_kernel_func<<< blocks, 1 >>>(args ...);

As an extension you can try some number of blocks of 16x16 threads, but don't try this until you have the above working first.

- Your program should use myopengllib to visualize your simulation as it runs on the GPU. See my comments in myopengllib.h and simple.cu for information about how to use this library. You can grab these from here:

~newhall/public/cs87/cuda_animation_example/

- CUDA programming Links and Resources

- Information about how to use my simple GPU animation library to simultaneously visualize your fire simulator as it runs is described in the comments in the library .h file and in the simple example program, simple.cu, that uses it. Copy over this code, examine it, and run it to see what it is doing.

cp ~newhall/public/cs87/cuda_animation_example/* .

- lab machine graphics card specs

- run deviceQuery to get CUDA stats about a GPU on a particular machine. It will list the limits on block and thread size, and the GPU memory size among other information.

- timing CUDA code: to time the GPU part of your program's execution, you need to create start and stop variables of type cudaEvent_t, start them, stop them, and compute the elapsed time using their values. To do this you will need to use functions cudaEventCreate, cudaEventRecord, cudaEventSynchronize, cudaEventElapsedTime, and cudaEventDestroy.
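The timing steps just listed can be sketched as follows. The kernel busy_kernel and the function time_kernels are hypothetical stand-ins for your own kernels and main loop; only the cudaEvent calls are the point of the example.

```cuda
#include <cuda_runtime.h>

// Hypothetical kernel that just gives the GPU something to do.
__global__ void busy_kernel(int *dev_data) {
    dev_data[blockIdx.x] += 1;
}

// Times `iters` kernel launches with CUDA events.
// Returns the elapsed GPU time in milliseconds, or -1 on allocation failure.
float time_kernels(int iters) {
    const int nblocks = 256;
    int *dev_data = NULL;
    cudaEvent_t start, stop;
    float ms = 0.0f;

    if (cudaMalloc((void **)&dev_data, sizeof(int) * nblocks) != cudaSuccess) {
        return -1.0f;
    }
    cudaMemset(dev_data, 0, sizeof(int) * nblocks);

    cudaEventCreate(&start);
    cudaEventCreate(&stop);

    cudaEventRecord(start, 0);          // mark the start in the default stream
    for (int i = 0; i < iters; i++) {
        busy_kernel<<<nblocks, 1>>>(dev_data);
    }
    cudaEventRecord(stop, 0);           // mark the end after the last kernel
    cudaEventSynchronize(stop);         // wait until the stop event has occurred
    cudaEventElapsedTime(&ms, start, stop);   // elapsed time in milliseconds

    cudaEventDestroy(start);
    cudaEventDestroy(stop);
    cudaFree(dev_data);
    return ms;
}
```

In a simulator that alternates kernel calls with display updates, one option is to record the two events around each step's kernels and add each elapsed time to a running total, so that only the GPU work is counted.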

- Random numbers in CUDA

Random number generators are inherently sequential: they generate a sequence of pseudo random values. It is inherently more complicated to generate pseudo random sequences in parallel. Depending on how your program wants to use random values, you may need to create separate random state for each thread that each thread uses to generate its own random sequence. Seeding each thread's state differently will ensure that threads are not generating identical random sequences.

The cuRAND library provides an interface for initializing random number generator state, and using that state to generate random number sequences. The following are the steps necessary for using cuRAND to generate random numbers in your program (you will use these to calculate the chance that a cell will catch fire if one or more of its neighbors is on fire):

- include curand headers

#include <curand_kernel.h>
#include <curand.h>

- allocate curandState for every CUDA thread:

curandState *dev_random;
HANDLE_ERROR(cudaMalloc((void**)&dev_random, sizeof(curandState)*N*N), "malloc dev_random");

- write a CUDA kernel to initialize the random state (each thread will initialize its own state on the GPU):

// a hash function for 32 bit ints
// (from http://www.concentric.net/~ttwang/tech/inthash.htm)
// defined before init_rand so that it is declared before its first use
__device__ unsigned int hash(unsigned int a) {
  a = (a+0x7ed55d16) + (a<<12);
  a = (a^0xc761c23c) ^ (a>>19);
  a = (a+0x165667b1) + (a<<5);
  a = (a+0xd3a2646c) ^ (a<<9);
  a = (a+0xfd7046c5) + (a<<3);
  a = (a^0xb55a4f09) ^ (a>>16);
  return a;
}

// CUDA kernel to initialize NxN array of curandState, each
// thread will use its own curandState to generate its own
// random number sequence
__global__ void init_rand(curandState *rand_state) {
  int row, col, offset;
  row = blockIdx.x;
  col = blockIdx.y;
  offset = col + row*gridDim.x;
  if(row < N && col < N) {
    curand_init(hash(offset), 0, 0, &(rand_state[offset]));
  }
}

- Call init_rand before calling any cuRAND library functions that use curandState:

init_rand<<<blocks,1>>>(dev_random);

- Now CUDA threads can generate random numbers on the GPU using their own initialized state:

__global__ void use_rand_kernel(curandState *rand_state, float prob) {
  int offset = ... // compute offset based on this thread's position in parallelization
  // get a random value uniformly distributed between 0.0 and 1.0 using my curandState
  float val = curand_uniform(&(rand_state[offset]));
  ...
}
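As an illustration of how a random value might be combined with the neighbor check, here is a sketch of the ignition decision only. It assumes one single-thread block per cell, state values stored in 1-D int arrays, and separate input and output arrays (so that every thread reads the previous step's states); the names should_ignite and ignite_kernel and these design choices are hypothetical, not requirements of the lab.

```cuda
#include <cuda_runtime.h>
#include <curand_kernel.h>

#define N 500   // world dimension

enum { LAKE, UNBURNED, BURNING, BURNED };

// curand_uniform returns a value in (0.0, 1.0], so comparing with <=
// means prob = 0 never ignites and prob = 1 always does.
__host__ __device__ inline bool should_ignite(bool neighbor_burning,
                                              float r, float prob) {
    return neighbor_burning && r <= prob;
}

// Decides, for each UNBURNED cell, whether it catches fire this step.
// cur_state is only read; results go to next_state.
__global__ void ignite_kernel(const int *cur_state, int *next_state,
                              curandState *rand_state, float prob) {
    int col = blockIdx.x;
    int row = blockIdx.y;
    if (row >= N || col >= N) {
        return;
    }
    int offset = row * N + col;
    int s = cur_state[offset];

    if (s == UNBURNED) {
        // no wrap-around: edge cells simply have fewer neighbors
        bool nb =
            (row > 0     && cur_state[offset - N] == BURNING) ||  // north
            (row < N - 1 && cur_state[offset + N] == BURNING) ||  // south
            (col > 0     && cur_state[offset - 1] == BURNING) ||  // west
            (col < N - 1 && cur_state[offset + 1] == BURNING);    // east
        float r = curand_uniform(&rand_state[offset]);
        if (should_ignite(nb, r, prob)) {
            s = BURNING;
        }
    }
    next_state[offset] = s;
}
```

The kernel would be launched with dim3 blocks(N, N) and one thread per block, after init_rand has initialized rand_state. A full simulator would also set the new cell's temperature to the ignition temperature and handle the other state transitions.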


If you implement some extensions to the basic simulator, please do so in a separate .cu file and build a separate binary so that I can still easily test your solution to the required parts of the lab assignment.

The required fire simulator is fairly simplistic and perhaps not implemented in the most efficient manner. Here are a few suggestions for some things to try to improve one or both of these (you are also welcome to come up with your own ideas for extensions):

- You could add wind direction and speed to your world data; this information, in combination with the neighbors' states, would determine if a cell catches fire at each step. For example, if a cell is up-wind from a neighbor on fire, its chances of catching fire will be much less than if it is down-wind from a neighbor on fire.

- You could add elevation data to each cell, and use a cell's elevation in combination with other data in determining its likelihood of catching fire. Fire might be more likely to move up in elevation than down...I don't know if this is true, but it seems plausible. If you add elevation data, you should color UNBURNED cells differently based on their elevation so you can "see" the elevation data.

- You could populate the area with different types of UNBURNED forest vegetation, associating with each cell its vegetation type. This can be used in combination with other data in determining how likely a cell is to catch fire, and its burn rate and max temp. For example, prairie may be more likely to catch fire, than pine forest, than deciduous forest, than marsh. Again, you should color different types of vegetation differently so that you can see the different types.

- You could model more realistic burning functions (they are likely not linear in real life).

- Try out different parallelizations. It is unlikely that NxN blocks of one thread each yields the fastest parallelization. Instead, try multi-threaded blocks. One suggestion is some number of 16x16 thread blocks (this size should work on all our systems).

- Try larger world sizes that require breaking the parallelization up more into blocks of thread blocks and require each thread to map to more than a single grid cell. See how big you can go (you may want to think about adding a command line option for the size and some dynamic memory allocation to more easily test this).

- All your source files, and makefile to build.

- A README file with: (1) your and your partner's names; (2) if you have a version with extra extensions, an example of how to run your extended fire simulator (a command line); and (3) a description of any features you have not fully supported and/or any errors you were unable to fix.

Either you or your partner should submit your tar file by running cs87handin.

If cs87handin is not working, you can email me your tar file or email me and tell me where in your file system your tar file is and I'll copy it out of there (don't modify the tar file after the due date). Here is one way to email it to me (you could also log into swatmail in firefox and send it to me as an attachment):

mail newhall < lab4.tar