Use Teammaker to form your team. You can log in to that site to indicate your partner preference. Once the lab is released and you and your partner have specified each other, a GitHub repository will be created for your team.

The objectives of this lab are to:

You will implement the following local search methods:

You will need to modify the following python files:

./LocalSearch.py HC TSP coordinates/South_Africa_10.json ./LocalSearch.py SA VRP coordinates/India_15.json ./LocalSearch.py BS VRP coordinates/United_States_25.json -config my_config.json -plot USA25_map.pdf

NOTE: In order to execute python files directly (as shown above, without having to type python3), you'll neeed to make the file executable first. Type the following at the unix prompt to change the file's permissions:

chmod u+x LocalSearch.py

The first example runs hill climbing to solve a traveling salesperson problem on the 10-city South Africa map. The second example runs simulated annealing to solve a vehicle routing problem on the 15-city India map. The third example runs stochastic beam search, and demonstrates the non-required options, which allow you to load a non-default parameter file and/or save the resulting map to a non-default output file.

Local search is typically used on hard problems where informed search is not viable (for example both TSP and VRP are NP-complete problems). Thus the search often runs for an extended period of time. It is important to keep the user informed of the search's progress. You should print informative messages during the search execution. For instance, you should inform the user whenever the best value found so far is updated along with the current search step at that point.

A traveling salesperson tour visits each of a collection of cities once before returning to the starting point. The objective is to minimize the total distance traveled. We will represent a tour by listing cities in the order visited. Neighbors of a tour are produced by moving one city to a new position in the ordering.

A vehicle routing plan sends several vehicles on round trip tours such that at least one vehicle visits each city, and all vehicles eventually return to the starting point. The general goal is to minimize travel distance. We represent a plan as a list of tours that all have the same starting point. Neighboring plans are ones where a single city has been reassigned (to a different vehicle and/or a different position in the tour).

The class TSP in the file TSP.py and the class VRP in the file VRP.py represent instances of each problem. Both of these classes provide several useful methods:

Instances of both TSP and VRP are constructed by passing in the name of a JSON file specifying the latitude and longitude of locations that must be visited. Example JSON files are located in the coordinates/ directory. The __init__() method also takes additional parameters read from a separate JSON file. The default file for these parameters is the file default_config.json.

The TSP and VRP classes leave the following methods for you to implement:

Note that most of these method names begin with a single underscore. This is a python convention for indicating a 'private' method. Python doesn't actually distinguish between private and public class members, but the underscore lets programmers know that the method isn't intended to be called directly. In this case, your local search functions should not call these methods. Instead, they should call TSP.get_neighbor(), or VRP.cost(), which will point to one of your functions based on the parameters from the config file. TSP.random_neighbor() does not begin with an underscore because the local search functions will sometimes need to call it directly.

You are strongly encouraged to incrementally test your code as you develop it. The best way to do this is to add tests inside the if at the bottom of the files TSP.py and VRP.py:

if __name__ == '__main__':

...

You should generate instances of each class and try out their methods.

candidate = problem.random_candidate() cost = problem.cost(candidate) return candidate, costThis will allow you to run hill climbing without generating errors:

./LocalSearch.py HC TSP coordinates/United_States_25.jsonDoing so will print the random candidate and its cost, and will also create a file that plots the tour in latitude/longitude coordinates. By default, the plot is saved in the file map.pdf, but you can change this with the -plot command line argument:

./LocalSearch.py HC TSP coordinates/India_15.json -plot random_India_tour.pdfYour next job is to implement the following hill climbing algorithm. This is a slightly different version than what we discussed in class. With some probability it will take a random move, and it will always execute the requested number of steps, keeping track of the best state found so far.

initialize best_state, best_cost = (None, float("inf"))

loop over runs

current_state = random candidate

current_cost = cost(state)

loop over steps

if random move

current_state, current_cost = random neighbor of current_state

else

neighbor_state, neighbor_cost = get neighbor of current_state

if neighbor_cost < current_cost:

update current_state, current_cost

if current_cost < best_cost

update best_state, best_cost

print status information about new best state and cost

return best_state, best_cost

Note that computing the cost of a tour takes non-trivial time, so whenever possible, you should save the value rather than making another function call.

Several of the parameters used above are specified in a config file.

By default, LocalSearch.py reads default_config.josn.

You should copy this file and modify it, passing in the new file via the -config command line argument:

cp default_config.json my_config.jsonThen edit the "HC" portion of my_config.json as well as the "TSP" and "VRP" portions with whatever defaults you'd like to use. Then you can re-run the local search based on your default selections.

./LocalSearch.py HC VRP coordinates/South_Africa_10.json -config my_config.json

SimulatedAnnealing.py is set up much like HillClimbing.py. Your next task is to implement the algorithm that we discussed in class on Wednesday.

initialize best_state, best_cost = (None, float("inf"))

loop over runs

initialize temp

current_state = random candidate

current_cost = cost(state)

loop over steps

candidate_state, candidate_cost = random neighbor of current state

delta = current_cost - candidate_cost

if delta > 0 or with probability e^(delta/temp)

current_state = candidate_state

current_cost = candidate_cost

if current_cost < best_cost

best_state = current_state

best_cost = current_cost

temp *= temp_decay

return best_state, best_cost

Edit the "SA" portion of the config file to set the defaults for simulated annealing. The init_temp specifies the starting temperature, and temp_decay gives the geometric rate at which the temperature decays. The runs and steps parameters have the same interpretation as in hill climbing: specifying how many random starting points simulated annealing should be run from, and how long each run should last.

Your final implementation task is to write stochastic beam search as discussed in class Friday.

initialize best_state, best_cost = (None, float("inf"))

initialize population with pop_size random candidates

initialize temp

loop over runs

generate max_neighbors candidates for each individual in pop

find overall best_neighbor_state, best_neighbor_cost

if best_neighbor_cost < best_cost

update best_state and best_cost

generate probabilities for selecting candidates based on costs:

compute costs of neighbors

compute e^(-cost/temp) for neighbors

normalize each by the sum

use normalized values as sampling probabilities

(NOTE: be sure to catch the ZeroDivisionError that may occur if

probabilities get too small)

pop = pop_size candidates selected by sampling

temp *= temp_decay

return best_state, best_cost

Stochastic Beam search uses the same temperature and steps parameters as simulated annealing. However, note that the algorithm uses the temperature somewhat differently. Additionally, instead of starting several separate runs, beam search maintains a population of candidates at each step. The pop_size parameter specifies the number of candidates.

Stochastic beam search considers neighbors of all individual candidates in the population and preserves each with probability proportional to its temperature score. It is recommended that you add a helper function for beam search that evaluates the cost of each neighbor and determines its temperature score, then randomly selects the neighbors that will appear in the next iteration. Remember to avoid excess calls to cost by saving the value whenever possible.

For the larger maps, the number of neighbors grows quite large, making it impractical to evaluate every neighbor of every candidate. Your helper function should therefore consider only a random subset of each individual's neighbors. The size of this subset is given by the max_neighbors parameter in the "BS" section of the config file.

A very useful python function for stochastic beam search is numpy.random.choice. This function takes a list of items to choose from, a number of samples to draw, and optionally another list representing a discrete probability distribution over the items. It returns a list of items that were selected. Here is an example:

import numpy.random items = ["a", "b", "c", "d", "e"] indices = list(range(len(items))) probs = [0.1, 0.1, 0.2, 0.3, 0.3] for i in range(5): choices = numpy.random.choice(indices, 10, p=probs) print(choices)

In this particular example, items "d" and "e" have the highest probability of being selected (30 percent chance each), and they are at indices 3 and 4. You can see in the sample results below that indices 3 and 4 are selected more often on average than indices 0, 1, and 2.

[3 2 2 2 4 3 4 4 4 4] [3 4 2 4 4 4 4 4 4 3] [4 4 4 3 4 2 3 0 3 2] [4 2 3 0 1 0 3 4 4 2] [4 0 1 4 4 2 4 0 4 3]

Unfortunately, numpy will treat a list of Tours as a multidimensional array, so it is better to select indices (as demonstrated above) rather than choosing from a list of neighbors directly.

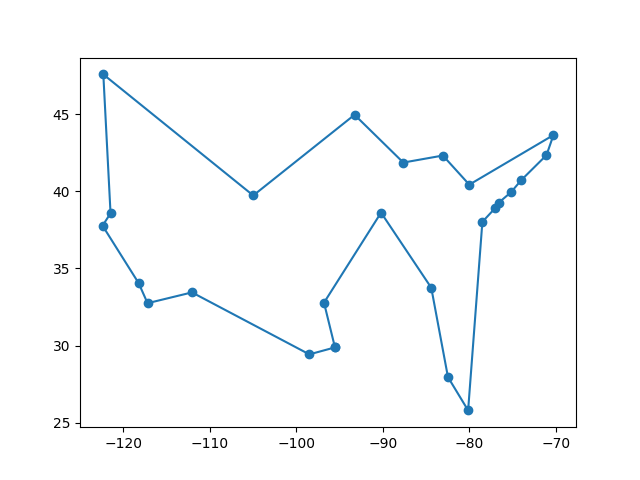

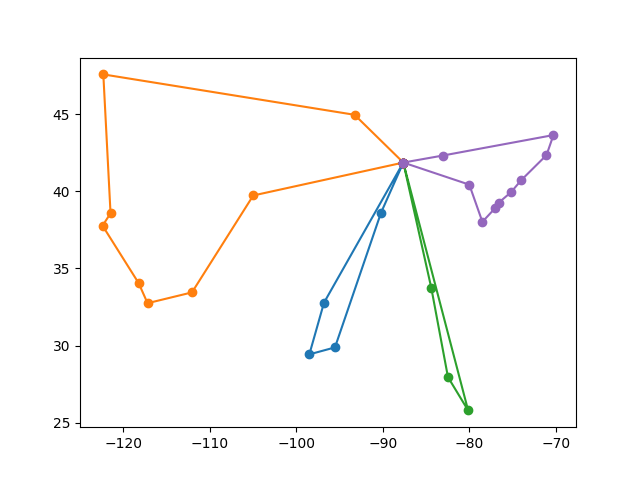

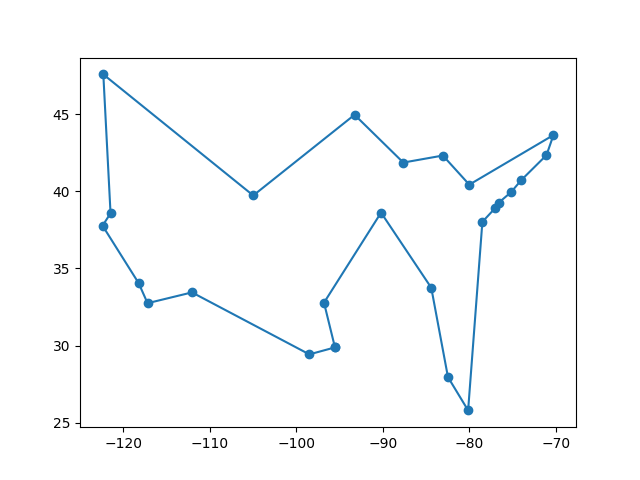

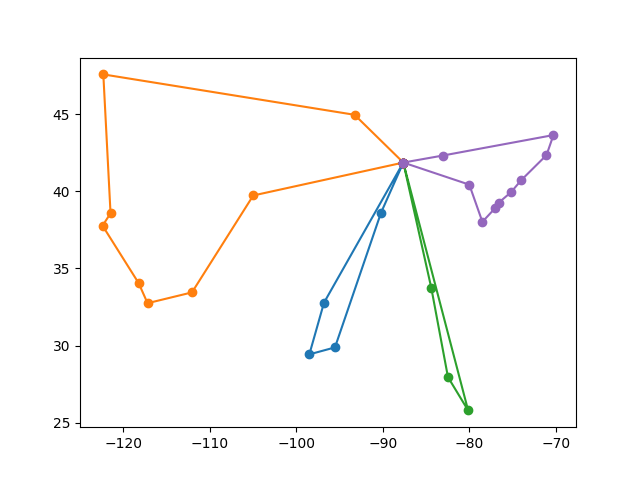

After all three of your local search algorithms are complete and working correctly, do some informal comparisons between the algorithms on one of the harder TSP problems, such as North_America_75.json. Be sure to spend a little time trying to tune the parameters of each algorithm to achieve its best results. If you find any particularly impressive tours, save them by renaming the file map.pdf to a more informative name.

In the file called summary.md, fill in the tables provided with your results and explain which local search algorithm was most successful at solving TSP problems.