If you want to work alone do:

setup63-Lisa labs/07 noneIf you want to work with a partner, then one of you needs to run the following command while the other one waits until it finishes:

setup63-Lisa labs/07 partnerUsernameOnce the script finishes, the other partner should run it on their account.

cd ~/cs63/labs/07 cp -r /home/meeden/public/cs63/labs/07/* .

git add * git commit -m "lab7 start" git push

cd ~/cs63/labs/07 git pull

In this lab, you will be exploring sequential decision problems that can be modeled as Markov Decision Processes (or MDPs). You will begin by experimenting with some simple grid worlds and then once you have gained some intuition about how these work, you will focus on Pac-Man.

You will start by testing Value Iteration agents that have access to the MDP model. Then you will look at Q-learning agents that instead must explore their environment and try to discover the underlying model.

The MDP and Pac-Man interfaces that you have copied to your

directory contain many files. Most of these files you can

ignore. The only files you will modify

are: analysis.txt, qlearningAgents.py, and featureExtractors.py.

An MDP describes an environment with observable states and stochastic actions. To experience this for yourself, run Gridworld in manual control mode, and use the arrow keys to move the agent:

python gridworld.py -m

You will see a two-exit environment. The blue dot is the agent. Note that each action you try only has its intended outcome 80% of the time, while 10% of the time it will go off in either of the orthogonal directions.

You can control many aspects of the simulation. A full list of options is available by running:

python gridworld.py -h

Rather than manually controlling the agent as you did above, the default agent moves randomly until it stumbles upon an exit. Try the default agent in another grid world:

python gridworld.py -g MazeGrid

Note: The Gridworld MDP is such that you first must enter

a pre-terminal state (the double boxes shown in the GUI) and then take

the special 'exit' action before the episode actually ends (in the

true terminal state called TERMINAL_STATE, which is not

shown in the GUI).

Look at the console output that accompanies the graphical output

(or use -t for all text). You will be told about

each transition the agent experiences (to turn this off,

use -q).

Positions are represented by (x,y)

Cartesian coordinates and any arrays are indexed

by [x][y], with 'north' being the

direction of increasing y, etc. By default, most

transitions will receive a reward of zero, though you can change

this with the living reward option (-r).

ValueIterationAgent has been defined in the

file valueIterationAgents.py.

The ValueIterationAgent takes an MDP on construction

and runs value iteration for the specified number of iterations before

the constructor returns.

The following command loads the ValueIterationAgent,

which will compute a policy and execute it 10 times. After value

iteration is complete, press a key to start the simulation. You

should find that the value of the start state (V(start))

and the empirical resulting average reward are quite close.

python gridworld.py -a value -i 100 -k 10

The expected value of a state depends on a number of factors including how future rewards are discounted, how noisy the actions are, and how much reward is received on non-exit states. Find appropriate settings for these variables in the following scenarios and record your settings in the analysis.txt file.

BridgeGrid with the default discount of 0.9 and the

default noise of 0.2, the optimal policy does not cross the bridge.

Change only ONE of the discount and noise parameters so that the

optimal policy causes the agent to attempt to cross the bridge.

python gridworld.py -a value -i 100 -g BridgeGrid --discount 0.9 --noise 0.2

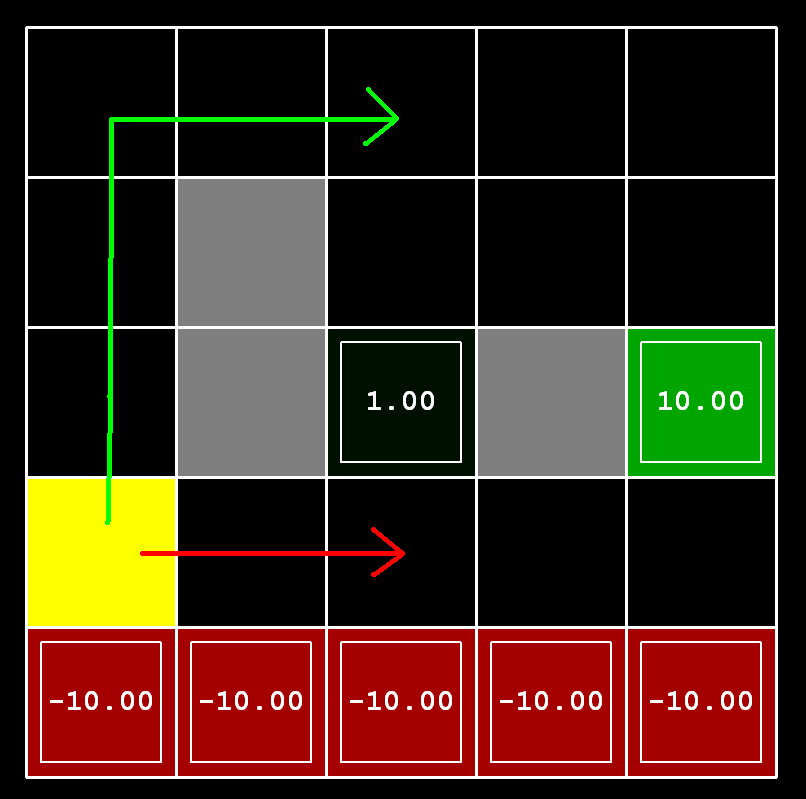

DiscountGrid layout, shown

below. This grid has two terminal states with positive payoff (shown

in green), a close exit with payoff +1 and a distant exit with

payoff +10. The bottom row of the grid consists of terminal states

with negative payoff (shown in red); each state in this "cliff"

region has payoff -10. The starting state is the yellow square. We

distinguish between two types of paths: (1) paths that "risk the

cliff" and travel near the bottom row of the grid; these paths are

shorter but risk earning a large negative payoff, and are

represented by the red arrow in the figure below. (2) paths that

"avoid the cliff" and travel along the top edge of the grid. These

paths are longer but are less likely to incur huge negative

payoffs. These paths are represented by the green arrow in the

figure below.

Give an assignment of parameter values for discount, noise, and

livingReward which produce the following optimal policy types or

state that the policy is impossible or say that it is

not possible. The default corresponds to:

python gridworld.py -a value -i 100 -g DiscountGrid --discount 0.9 --noise 0.2 --livingReward 0.0

You will now write a Q-learning agent that learns a policy for how to behave in the grid worlds you just explored.

This policy will be created from trial and error interactions with

the environment through its update method. A stub of a

Q-learner is specified in the file qlearningAgents.py

in the class QLearningAgent.

This Q-learning agent will maintain a table of its estimated value for all possible state-action combinations that it encounters in the environment. The agent knows the possible actions it can take, but does not know the possible states it will encounter. Any time the agent visits a new state for the first time, you'll need to add this state to the table with estimated values of 0 for all possible actions.

getQValue

computeValueFromQValues

computeActionFromQValues

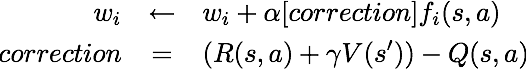

updateQ(s,a) += alpha * ( R(s) + gamma * max[Q(s',a')] - Q(s,a) )Where alpha is the learning rate, gamma is the discount factor, and you take the maximum value you find in the Q-table for the next state s' when considering all possible actions a'.

Note: For

computeActionFromQValues, you should break ties randomly

for better behavior. In a particular state, actions that your

agent hasn't seen before still have a Q-value, specifically a

Q-value of zero, and if all of the actions that your

agent has seen before have a negative Q-value, then an unseen

action may be optimal.

Important: Make sure that you only access Q values by calling

getQValue in your methods. This abstraction will be

useful later when we will override getQValue in

approximate Q-learning to use features of state-action pairs rather

than state-action pairs directly.

python gridworld.py -a q -k 5 -mThe

-k will control the number of episodes your

agent gets to learn. Watch how the agent learns about the state it

was just in, not the one it moves to, and "leaves learning in its

wake."

getAction, meaning it chooses random actions

epsilon of the time, and follows its current best q-values otherwise.

python gridworld.py -a q -k 100Your final q-values should resemble those of the value iteration agent, especially along well-traveled paths. However, your average returns will be lower because of the random actions and the initial learning phase.

python crawler.py

If this doesn't work, you've probably written some code too specific

to the GridWorld problem and you should make it more

general to all MDPs.

Once Q-learning is working on grid worlds and the crawler robot you are ready to move on to Pac-Man.

Familiarize yourself with the Pac-Man interface. Try playing a game of Pac-Man by typing the following at the command line:

python pacman.pyIn this case you are the agent that is controlling Pac-Man. Use the arrow keys to move Pac-Man around in his environment. The smaller white dots represent food. The goal of the game is to eat all of the food while avoiding the ghosts that are trying to thwart Pac-Man. If Pac-Man runs into a ghost, he dies. However, the larger white dots are special capsules that when eaten by Pac-Man make the ghosts safe to contact. The ghosts will only be safe for a short time, while they are colored white, but they eventually become dangerous again.

lab7 directory you'll see that you have a

sub-directory called layouts. When you invoke the pacman

game, by default it chooses the mediumClassic.lay layout.

However you can use command-line arguments to choose other layouts.

For example:

python pacman.py -l smallGridTry other other layouts in the directory. Notice that these layouts are simply text files that follow a convention for designating the locations of walls, food, capsules, ghosts and pacman.

pacman.py supports a number of options that can

each be expressed in a long way (e.g., --layout) or a

short way (e.g., -l). You can see the list of all

options and their default values via:

python pacman.py -h

GameState class which is defined in the

file pacman.py. Open up this file and look through

the options.

It is important to recognize that the game has a number of different agents (Pac-Man and the ghosts). Each agent in the game has a unique index; Pac-Man is always index 0, with ghosts starting at index 1.

Pac-Man can perceive:

In addition, Pac-Man can also determine given the action he chooses

what the next state of the environment will be, by using the

method generatePacmanSuccessor.

The file SimpleAgents.py contains examples of three basic agents that control PacMan:

As you can see in this file, at a minimum, a Pac-Man agent must have a getAction method that takes in a state and returns a valid action.

To test these agents do:

python pacman.py -l openSearch -p RandomAgent python pacman.py -l openSearch -p ReflexAgent python pacman.py -l openSearch -p StateAgent

You will be creating a Pac-Man agent that chooses actions based on Q-learning's assessment of which action is likely to maximize long-term reward.

Time to use Q-learning to play some Pac-Man! Pac-Man will play

games in two phases. In the first phase, training, Pac-Man

will begin to learn about the values of positions and actions.

Because it takes a very long time to learn accurate q-values even for

tiny grids, Pac-Man's training games run in quiet mode by default,

with no GUI (or console) display. Once Pac-Man's training is

complete, he will enter testing mode. When testing,

Pac-Man's self.epsilon and self.alpha will

be set to 0.0, effectively stopping Q-learning and disabling

exploration, in order to allow Pac-Man to exploit his learned policy.

Test games are shown in the GUI by default. Without any code changes

you should be able to run Q-learning Pac-Man for very tiny grids as

follows:

python pacman.py -p PacmanQAgent -x 2000 -n 2010 -l smallGridNote that

PacmanQAgent is already defined for you in

terms of the QLearningAgent you've already

written. PacmanQAgent is only different in that it has

default learning parameters that are more effective for the Pac-Man

problem (epsilon=0.05, alpha=0.2, gamma=0.8).

Your agent should win at least 80% of the games in the last 10 runs.

Hint: If your QLearningAgent works

for gridworld.py and crawler.py but does not

seem to be learning a good policy for Pac-Man

on smallGrid, it may be because

your getAction and/or getPolicy methods do

not in some cases properly consider unseen actions. In particular,

because unseen actions have by definition a Q-value of zero, if all of

the actions that have been seen have negative Q-values, an

unseen action may be optimal.

Note: If you want to experiment with learning parameters,

you can use the option -a, for

example -a epsilon=0.1,alpha=0.3,gamma=0.7. These

values will then be accessible as self.epsilon,

self.gamma and self.alpha inside the agent.

While a total of 2010 games will be played, the

first 2000 games will not be displayed because of the option -x

2000, which designates the first 2000 games for training (no

output). Thus, you will only see Pac-Man play the last 10 of these

games. The number of training games is also passed to your agent as

the option numTraining.

During training, you will see output every 100 games with statistics about how Pac-Man is faring. Epsilon is positive during training, so Pac-Man will play poorly even after having learned a good policy: this is because he occasionally makes a random exploratory move into a ghost.

As a benchmark, it should take about 1,000 games before Pac-Man's rewards for a 100 episode segment becomes positive, reflecting that he's started winning more than losing. By the end of training, it should remain positive and be fairly high (between 100 and 350).

If you want to watch 10 training games to see what's going on, use the command:

python pacman.py -p PacmanQAgent -n 10 -l smallGrid -a numTraining=10

Once Pac-Man is done training, he should win very reliably in test games, since now he is exploiting his learned policy.

However, you'll find that training the same agent on the seemingly

simple mediumGrid may not work well. In our

implementation, Pac-Man's average training rewards remain negative

throughout training. At test time, he plays badly, probably losing

all of his test games. Training will also take a long time, despite

its ineffectiveness.

python pacman.py -p PacmanQAgent -x 2000 -n 2010 -l mediumGrid

Pac-Man fails to win on larger layouts because each board configuration is a separate state with separate q-values. He has no way to generalize that running into a ghost is bad for all positions. Obviously, this approach will not scale. In the next section we will see how to use an approximate version of Q-learning to improve performance on larger layouts.

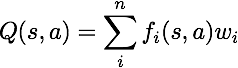

Next you will implement an approximate q-learning agent that learns weights for features of states, where many states might share the same features.

Approximate q-learning assumes the existence of a feature function

f(s,a) over state and action pairs, which yields a vector

f1(s,a) .. fi(s,a) .. fn(s,a) of

feature values. We provide feature functions for you

in featureExtractors.py.

The constructor of the ApproximateQAgent class in the

file qlearningAgents.py includes a

variable self.featureExtractor that is set from the

command-line arguments. To access the features for a given state and

action do:

self.featureExtractor.getFeatures(state,action)This will return a feature vector which is an instance of a

util.Counter object. You can see the implementation of

the Counter class in the util.py file.

A Counter is like a dictionary that maps keys to values, but

with some additional functionality. Any omitted key automatically has

a value of zero. Also you can multiply, add, and subtract

two Counter objects. A feature vector will map feature names

such as 'closest-food' to a number representing the distance

to the closest food pellet from Pac-Man's current position.

In the file qlearningAgents.py, complete the

implementation of the ApproximateQAgent class as follows:

if self.episodesSoFar == self.numTraining:

print "Weights at the end of training"

print self.weights

By default, ApproximateQAgent uses

the IdentityExtractor, which assigns a single feature to

every (state,action) pair. With this feature extractor,

your approximate q-learning agent should work identically

to PacmanQAgent. You can test this with the following

command:

python pacman.py -p ApproximateQAgent -x 2000 -n 2010 -l smallGrid

Important: ApproximateQAgent is a subclass

of QLearningAgent, and it therefore shares several

methods like getAction. Make sure that your methods

in QLearningAgent call getQValue instead of

accessing q-values directly, so that when you

override getQValue in your approximate agent, the new

approximate q-values are used to compute actions.

Once you're confident that your approximate learner works correctly with the identity features, you can try it with the custom feature extractor called SimpleExtractor, which depends on just four features:

Note: In order to keep weights in a reasonable range, all of these features are divided by 10.0.

Your approximate q-learning agent with the SimpleExtractor should learn to win with ease on the mediumGrid:

python pacman.py -p ApproximateQAgent -a extractor=SimpleExtractor -x 50 -n 60 -l mediumGrid

Even much larger layouts, such as the mediumClassic should

be no problem for your ApproximateQAgent

(warning: this may take a few minutes to train).

python pacman.py -p ApproximateQAgent -a extractor=SimpleExtractor -x 50 -n 60 -l mediumClassic

If you have no errors, your approximate q-learning agent should win almost every time with these simple features, even with only 50 training games.

When watching your trained approximate q-learning agent using the SimpleExtractor, you probably noticed that he actively avoids ghosts, even when they are safe to eat. This is because the given feature extractor doesn't distinguish between safe and dangerous ghosts, so ghosts always look dangerous. If you try your approximate q-learning agent on some of the hardest layouts like contestClassic and trickyClassic, the fact that Pac-Man is not seeking out and using the capsules and not eating safe ghosts hurts his performance.

In the file featureExtractors.py make a new extractor class called BetterExtractor that will improve on SimpleExtractor. Remember that you can look in the file pacman.py at the methods in the GameState class, for information you can use in your extractor. You might want to consider incorporating:

Note: Each ghost state maintains a variable called scaredTimer. This timer is 0 when a ghost is dangerous to Pac-Man and greater than 0 when a ghost is safe for Pac-Man to eat.

Test your better extractor on the hardest Pac-Man layouts and see if it fares better than the simple one:

python pacman.py -p ApproximateQAgent -a extractor=BetterExtractor -x 50 -n 60 -l contestClassic

Be sure to write a clear comment with your BetterExtractor explaining what features it is focusing on.

cd ~/cs63/labs/07 git add analysis.txt qlearningAgents.py featureExtractors.py git commit -m "final version" git push